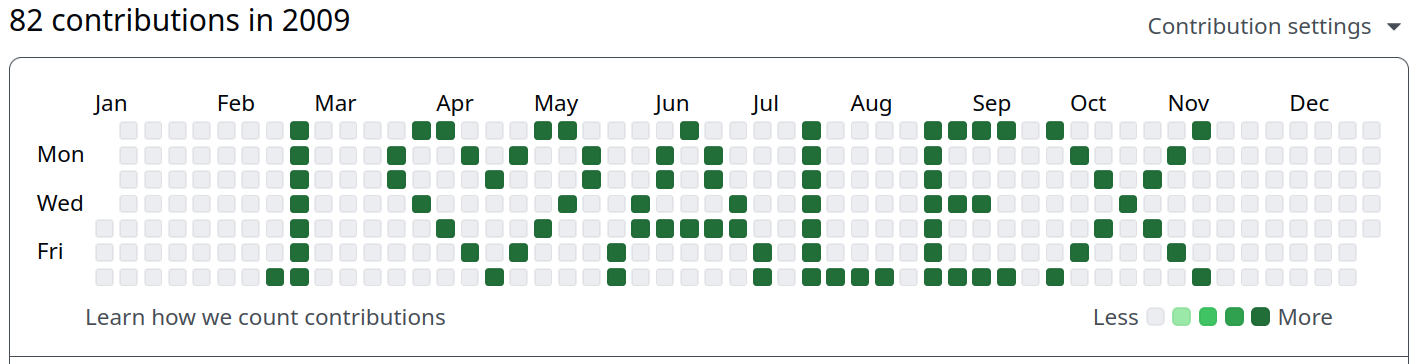

I am a Senior Researcher and lead of the Metadata Standards and Interoperability team in the Data Services for the Social Sciences department at GESIS. Before joining GESIS in April 2025, I worked as a Post-Doc Researcher on the NEWSFLOWS project, which started at the Department of Communication Science at the University of Amsterdam before moving to VU Amsterdam. Until September 2023, I was based at the Department of Communication Science at Vrije Universiteit Amsterdam, where I worked on the OPTED project and AmCAT (Amsterdam Content Analysis Toolkit). From 2021 to 2022, I worked as a Post-Doc Researcher at the Chair for Digital Democracy at the European New School of Digital Studies (ENS), European University Viadrina Foundation Frankfurt (Oder). In 2021, I completed my PhD in Politics at the University of Glasgow.

In my PhD project, I scrutinised how the media in the UK portray protest events. Most literature on the topic assumes that the media delegitimise the messages of protests through routinised framing, i.e. a focus on disruption by and deviance of protesters. In my project, I collected all articles published in selected UK newspapers that mention a protest in the UK over a 26-year period (1992-2017; N > 27,000) and analysed the content using an innovative approach to framing analysis that combines best-practice manual coding techniques with supervised machine learning.

After a detour that included a Master's in Political Theory, I realised during my Master's in Political Communication (which was originally planned as a semester abroad) how much I love working with data. R in particular, the free software environment for statistical computing and graphics, captures much of my attention nowadays and has helped me to combine my two most long-standing passions: Political Science and fiddling with computers. I’m using R to do nearly everything (including writing my thesis and this website).

PhD in Politics, 2021

University of Glasgow

MSc Political Communication, 2015

University of Glasgow

MA Political Theory, 2017

Goethe University of Frankfurt/Main & TU Darmstadt

BA Political Science; Economics and Economic Studies in History, 2012

RWTH Aachen-University

rwhatsapp is a small yet robust package that provides some infrastructure to work with WhatsApp text data in R. WhatsApp seems to be becoming increasingly important not just as a messaging service but also as a social network, thanks to its group chat capabilities. This package is intended to make the first step of analysing WhatsApp text data as easy as possible: reading your chat history into R. This should work no matter which device or locale you used to retrieve the txt or zip file containing your conversations.

paperboy is a comprehensive collection of webscraping scripts for news media sites. Many data scientists and researchers write their own code when they have to retrieve news media content from websites. At the end of research projects, this code often ends up collecting digital dust on researchers' hard drives instead of being made public for others to use. paperboy offers writers of webscraping scripts a clear path to publish their code and earn co-authorship on the package. For users, the promise is simple: paperboy delivers news media data from many websites in a consistent format.

wallpapr is a little toy R package to make desktop and phone backgrounds using ggplot2. The design is inspired by (aka copied one-to-one from) the beautiful calendar wallpapers of Emma. You can check out her wallpapers at: emmastudies.com/tagged/download. With this package you can create your own calendar wallpapers using an input image.